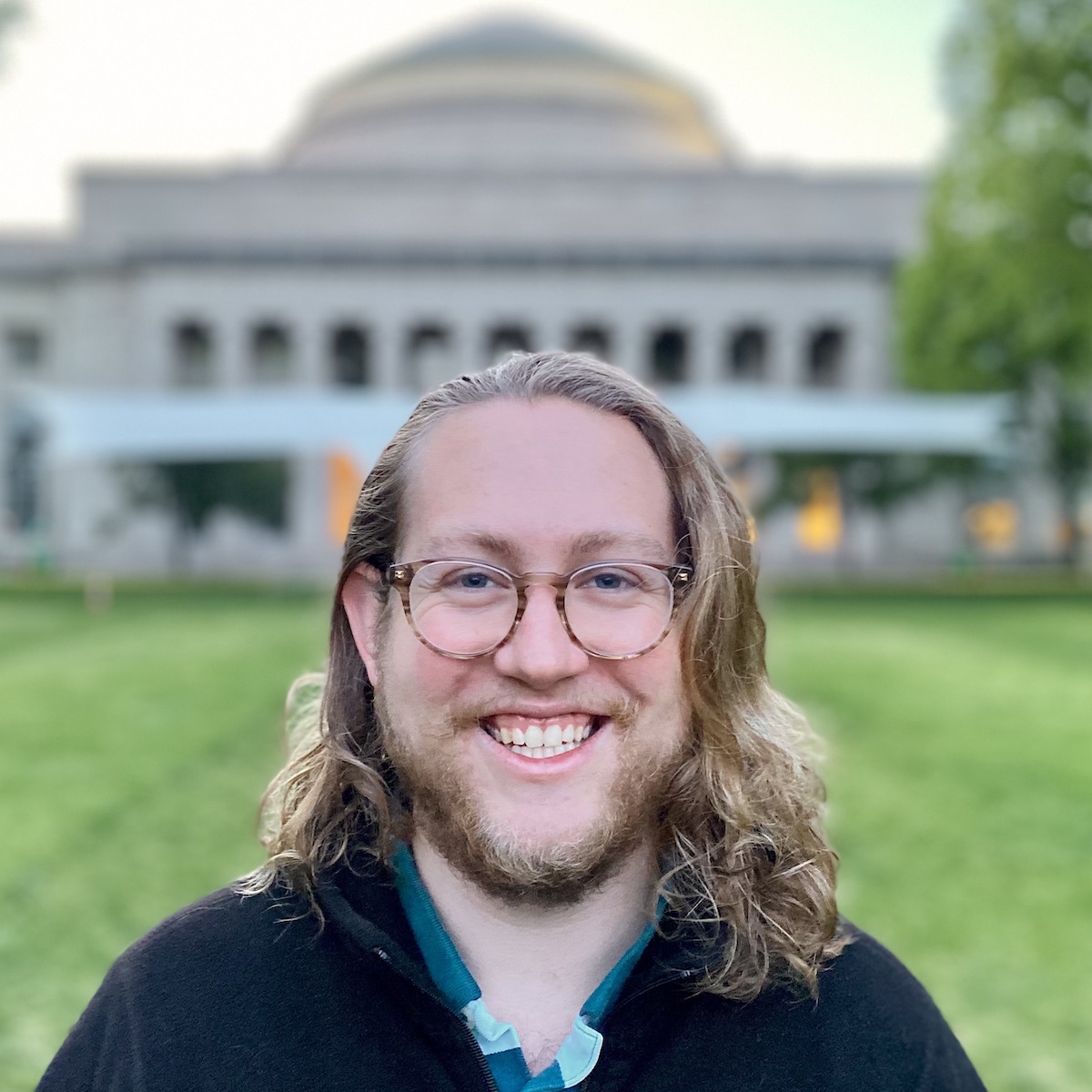

Research Profile

I’m a PhD student at MIT CSAIL co-advised by Jacob Andreas and Josh Tenenbaum.

I’m broadly interested in the interface between language and thinking. My research combines techniques from natural language processing, program synthesis, and classical symbolic methods. I’m working closely with researchers at MIT and other institutions to build a community around neurosymbolic programming.

My research is supported by an MIT Presidential Fellowship and the NSF Graduate Research Fellowship.

Selected Publications

Self-Steering Language Models.

Gabriel Grand, Joshua B. Tenenbaum, Vikash K. Mansinghka, Alexander K. Lew, Jacob Andreas. arXiv: 2504.07081 (2025).

[arXiv]

[GitHub]

Featured on 🤗 Daily Papers

Syntactic and Semantic Control of Large Language Models via Sequential Monte Carlo.

João Loula, Benjamin LeBrun, Li Du, Ben Lipkin, Clemente Pasti, Gabriel Grand, Tianyu Liu, Yahya Emara, Marjorie Freedman, Jason Eisner, Ryan Cotterell, Vikash Mansinghka, Alexander K. Lew, Tim Vieira, Timothy J. O’Donnell. International Conference on Learning Representations (ICLR, 2025).

[OpenReview]

[GitHub]

Oral Talk, ICLR 2025

Stream of Search (SoS): Learning to Search in Language.

Kanishk Gandhi, Denise Lee, Gabriel Grand, Muxin Liu, Winson Cheng, Archit Sharma, Noah D. Goodman. Conference on Language Models (COLM, 2024).

[arXiv]

[GitHub]

Oral Spotlight, COLM 2024

LILO: Learning Interpretable Libraries by Compressing and Documenting Code.

Gabriel Grand, Lionel Wong, Matthew Bowers, Theo X. Olausson, Muxin Liu, Joshua B. Tenenbaum, Jacob Andreas. ICLR (2024).

[arXiv]

[GitHub]

[Talk]

From Word Models to World Models: Translating from Natural Language to the Probabilistic Language of Thought.

Lionel Wong*, Gabriel Grand*, Alexander K. Lew, Noah D. Goodman, Vikash K. Mansinghka, Jacob Andreas, Joshua B. Tenenbaum. arXiv: 2306.12672 (2023).

[arXiv]

[GitHub]

Sequential Monte Carlo Steering of Large Language Models using Probabilistic Programs.

Alexander K. Lew, Tan Zhi-Xuan, Gabriel Grand, Vikash K. Mansinghka. arXiv: 2306.03081 (2023).

[arXiv]

[GitHub]

[Docs]

Identifying concept libraries from language about object structure.

Catherine Wong*, William P. McCarthy*, Gabriel Grand*, Yoni Friedman, Joshua B. Tenenbaum, Jacob Andreas, Robert D. Hawkins, and Judith E. Fan. CogSci (2022).

[arXiv]

[website]

[GitHub]

“Semantic projection” recovers rich human knowledge of multiple object features from word embeddings.

Gabriel Grand, Idan Blank, Francisco Pereira, and Evelina Fedorenko. Nature Human Behaviour (2022).

[Nature]

[MIT McGovern Institute]

[arXiv]

Milestones

🙏 04/2025: Self-Steering LMs preprint released! arXiv:2504.07081. I’ll be presenting this work at the 2025 New England NLP (NENLP) Meeting (Yale) as well as the AI Verification in the Wild (VerifAI) workshop at ICLR 2025 (Singapore).

⚓️ 12/2024: “A Llama Sunk my Battleship” presented at the workshop on System 2 Reasoning at Scale at NeurIPS 2024.

🔎 09/2024: Stream of Search accepted for an oral spotlight presentation at COLM 2024!

🚀 05/2024: LILO presented at ICLR 2024! video link

🚀 10/2023: LILO preprint and code now released! arXiv:2310.19791

🎬 06/2023: Presented on “From Word Models to World Models” at the “LLMs meet CogSci” virtual town hall at CogSci 2023. Link to talk recording (15 mins)

🧠 06/2023: Gave a contributed talk with Lio Wong at SPP 2023 in Pittsburgh on our new work: From Word Models to World Models: Translating from Natural Language to the Probabilistic Language of Thought. arXiv:2306.12672

λ 01/2023: This week, I’m attending POPL 2023 here in Boston!

☀️ 06/2022: This summer, I’ll be attending the Neurosymbolic Summer School at Caltech as well as CogSci 2022 in Toronto.

🤖 05/2021: I will be starting my PhD at MIT EECS and CSAIL this fall! I’m thrilled to continue my research career under the co-mentorship of Jacob Andreas and Josh Tenenbaum.

🎓 04/2021: I am grateful to accept an NSF Graduate Research Fellowship in support of my PhD research.

Last updated on April 10, 2025